four note friday 2.15 | Photovoice and AI Voice

A few posts ago, I wrote about the possibility of a second edition of my book on the photovoice methodology. I outlined several topics that now need consideration for edition two. Generative AI was one such topic.

The pre-work for that post led me to discover AI Voice, outlined in this article, a version of photovoice wherein participants use AI tools to generate images rather than cameras to generate photographs.

Within this post, I detail four take-aways from that article as a starting point for thinking about how to write about AI in the context of the photovoice methodology.

Before diving into the take-aways, let me provide a brief overview of the paper on which this post is based. Jason Hughes, Linda Homan, Michelle O'Reilly, and Kahryn Hughes wrote a piece called "AI Voice Methodology: Using Generative AI in Qualitative Social Research," which was published in Qualitative Inquiry in 2025. The authors introduce AI Voice as a new methodology and depict the use of collaborative AI image generation among teens as a precursor to discussions about sensitive topics such as coping and adjustment behaviors (p. 3).

As with most adaptations of the photovoice methodology, these researchers were trying to address a data collection issue (one that came up within Homan's dissertation work), which resulted in methodological innovation.

Both photovoice and photo elicitation were considered as early possible approaches to understanding young people's perspectives. But it was deemed that using photovoice "would have raised resource, practical, and above all, ethical concerns—particularly given the sensitive character of the topic, the age of participants, and the issues relating to privacy, etc.—that fell beyond the scope of the study" (p. 3).

Relatedly, photo elicitation was ruled out because of how difficult it was for the researchers to generate photographs that matched the depictions in their minds' eyes.

The team subsequently "experimented with ChatGPT’s image-generation functionality as a possible source of visual elicitation materials to use in focus groups with the young people. [They] soon were able to produce some remarkably photorealistic images that, [they] felt, might be suitable for the purposes of the research" (p. 4).

Once the team realized the efficacy of generative AI's image-creating capacity, they decided to invite "participants to co-generate images themselves and to use these as a basis for reflection and discussion" (p. 5).

Okay. So what are my take-aways here?

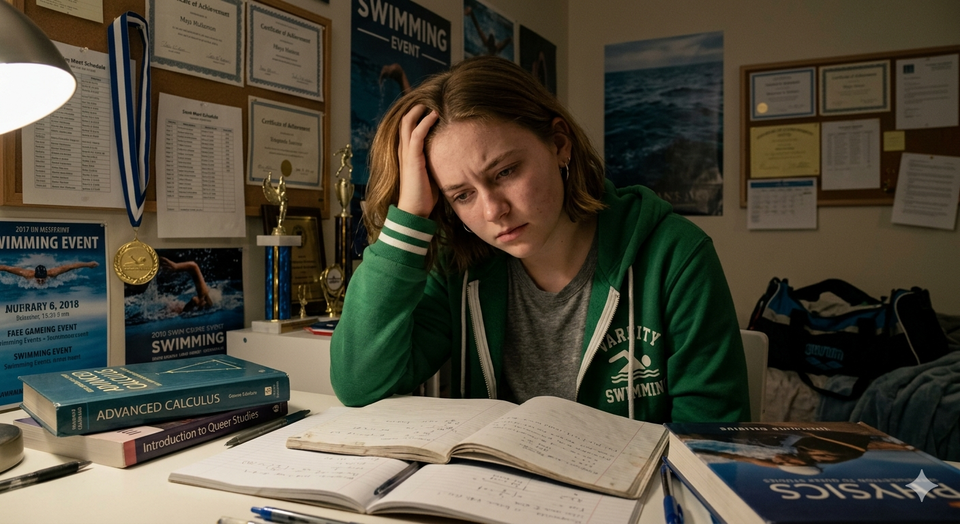

[Quick sidebar. The image above was created using Gemini, which the authors note as being optimal for this type of work (see page 8). It was generated from the following prompt: Please create a photo realistic image of a young high school-aged girl who is struggling with her sexuality. As a result, she becomes a hyper achiever in the domains of sport and academics to compensate.

Alternatively, when I tried another prompt, one more aligned with the Hughes et al. (2025) study, at least in my estimation, I got an interesting reply from the platform.

Prompt: Create a photorealistic image of a teenage girl who is struggling with body image, online bullying, and mental health.

Gemini: I can create images for you, but not ones that depict minors like that. Can I help with a different image instead?

I found it interesting that "struggling with sexuality" does not raise caution in the same way that words like struggling with body image, online bullying, and mental health do. This built-in bias[?] could likely impede this kind of work.]

AI Voice Is More Like Photo Elicitation than Photovoice

While the authors argue that their "approach was much closer to the photovoice than the photo elicitation type model" (p. 5), I disagree. There was not enough evidence in the paper to substantiate this claim. AI Voice was much more like a method than a methodology. It is emblematic of participatory photo elicitation. It should, therefore, be called generative AI (or GenAI) image elicitation. Unlike photovoice, it seems data generation is where the participatory nature of the approach ends.

There was no mention of theoretical underpinnings, project aims, or dissemination strategies that included an exhibition (broadly defined). No mention of policy or social change was included. While reflexive thematic analysis was noted as the guiding data analysis framework, which could be leveraged alongside photovoice, too much of the process diverged from photovoice for me to get on board with the above claim.

However, later in the piece the authors stated that "AI Voice operates through fundamentally different routes and channels than traditional photovoice methodology" (p. 13). I can get on board with this.

More Attention to Theory Is Needed Within AI Voice

While the paper was theoretically dense, there was no attention to the theoretical underpinnings of photovoice or how those underpinnings would need reworking in the pursuit of AI Voice as a methodology adapted from photovoice. In most adaptations of photovoice, theory is attended to, which keeps the various components of the methodology aligned throughout application. Theory should not be relegated to an afterthought.

Not All Photovoice Projects Can Be Turned into AI Voice Projects

This take-away reminds me of an earlier post I wrote about Strack et al.'s (2022) piece on photovoice classifications within the social ecological model (SEM). Not all photovoice projects are the same. Strack et al.'s piece divides photovoice projects into four classifications: photovoice as (a) photovention, (b) community assessment, (c) community capacity (building), and (d) advocacy.

Taking the above framework from Strack et al. (2022) as a guide, AI Voice would only work well as a photovention. Photoventions are typically focused on the experiences of a group of individuals with a shared experience, not necessarily a shared community comprised of many different individuals. For example, it would be tough—though not impossible—to understand a neighborhood's strengths and weaknesses using AI-generated images. It would be a lot easier to use AI Voice to understand how people make sense of a specific major life transition or tell stories about flourishing at work.

Burner Accounts, Epistemic Foils, Productive Distance, and Artificial Representation

Some fascinating new-to-me terms were at play within this article, which I intend to think on and learn about further. Here is an overview:

- Burner Accounts. Participants in this study were asked to create ChatGPT burner accounts to use during participation. These accounts were to be separate from any other ChatGPT accounts they may have had. In a sense, they were throwaway accounts, to be used during the study and then discarded.

- Epistemic Foils. The images generated during the study were termed epistemic foils, though this term was never explicitly defined within the paper. The term refers to the idea that the AI images generated were photorealistic but not real. Yet the images also conveyed a hyperreality, emphasizing emotions, contexts, and details, which can serve as both a mirror and a contrast to the actual realities and/or feelings of the participants.

- Productive Distance. That the images served as epistemic foils allowed for the participants to distance themselves from the images in an epistemically productive way. This productive distance allowed focus group participants to focus on the images, not necessarily themselves directly, which paved a way forward for the conversation devoid of embarrassment, fear of over-disclosure, or vulnerability.

- Artificial Representation. While it could be argued that photovoice projects always include some level of artificial representation (e.g., What is not in the frame? What is avoided?), the use of generative AI within AI Voice projects is artificial representation. But this is not all bad. In fact, the authors stated that the inception of AI Voice signals "new possibilities for qualitative research to harness collaborative reflections while maintaining a degree of individual privacy and emotional safety" (p. 16). Furthermore, they "suggest that this might be particularly valuable when working with young people on sensitive topics where the productive gap between artificial representation and lived experience opens methodologically rich spaces for exploration and understanding" (p. 16).

What do you think? I would love to learn about readers' thoughts and ideas about the things I write about. Is AI Voice the future of photovoice and photovoice-adjacent inquiry?

🥹 Thanks for spending a moment with me this Friday.

💌 If you’re new here, welcome! I hope this space becomes one you look forward to each week.

📬 Have a question you want me to answer in a future issue? Reach me at photovoicefieldnotes@gmail.com. I'd love to hear from you.

Thanks for being here.

Warmly,

Mandy

photovoice field notes

photovoicefieldnotes.com
